# Introduction

Why do you use Python? For a lot of people it comes down to “just because,” but it really shouldn’t. Python is a powerful, general-purpose programming language with a simple syntax and distinctly Pythonic approaches to managing logic and data. For precisely these reasons, it has become the go-to language for data science, machine learning, and AI. Python is easy to pick up, but you can spend many years improving your skills and mastering the core mechanisms of the language as you move from beginner to a professional who can write efficient, maintainable systems.

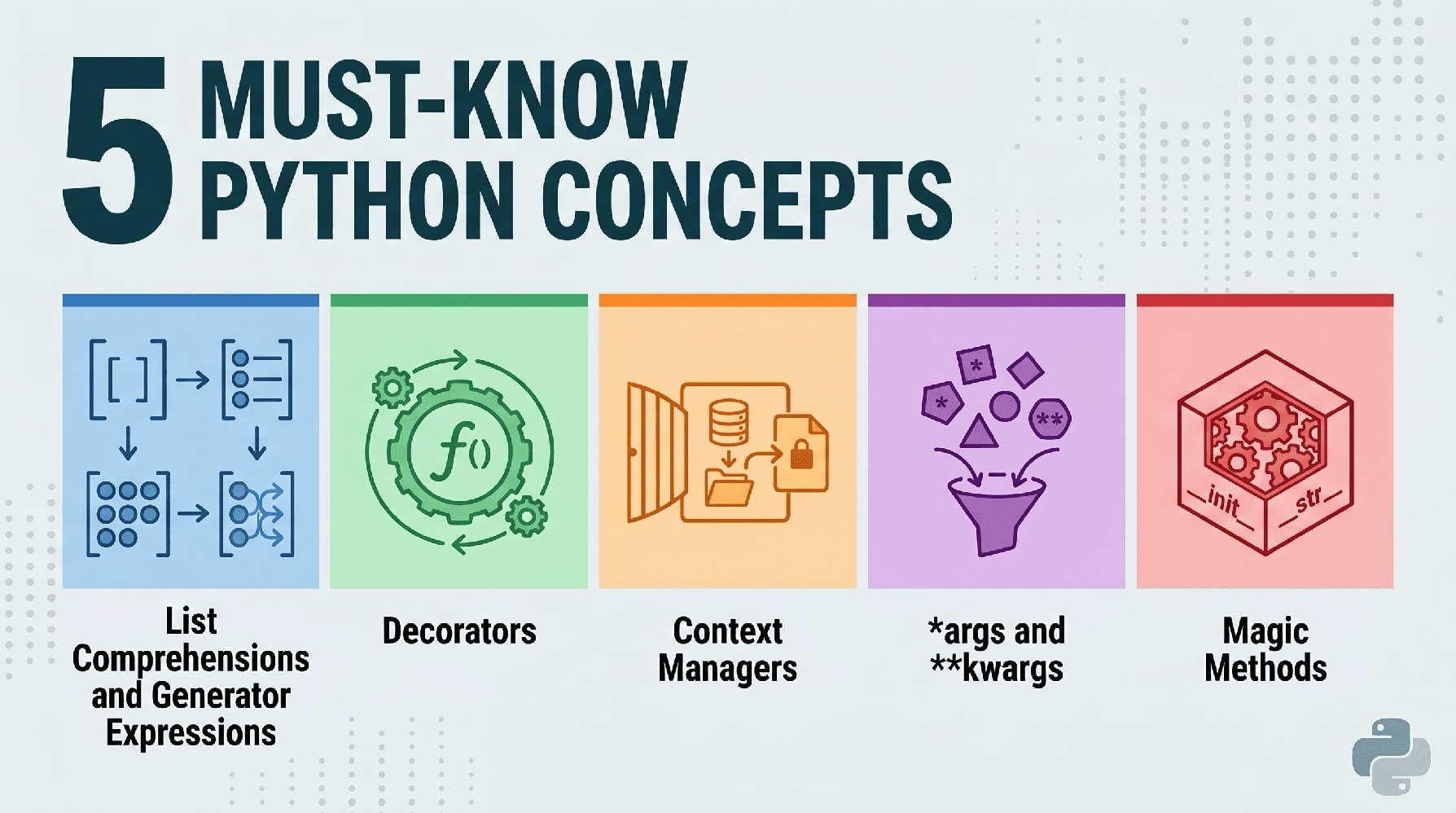

With this in mind, today we will explore five fundamental concepts that every Python developer should have in their toolkit.

# 1. List Comprehensions and Generator Expressions

Python is famous for its readability. List comprehensions allow you to replace clunky loops with a single line of code. However, the real pro move here is knowing when to use a generator expression instead to save memory.

## The Clunky Way (For Loop)

Let’s start with the inefficient, non-Pythonic “clunky” way of doing things:

    numbers = range(1000000)
    squared_list = []

    for n in numbers:
        if n % 2 == 0:
            squared_list.append(n ** 2)

## The Pythonic Way (List Comprehension)

Now let’s take a look at the Pythonic way of solving the same task:

    # Concise and faster execution
    squared_list = [n ** 2 for n in numbers if n % 2 == 0]

    # The "Must-Know" Twist: Generator Expressions
    # If you only need to iterate once and don't need the whole list in memory:
    squared_gen = (n ** 2 for n in numbers if n % 2 == 0)

Output:

    List size: 4,167,352 bytes
    Generator size: 200 bytes
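The snippet above does not show how these sizes were measured. One plausible way, assuming the standard library's sys.getsizeof() was used, is sketched below. Exact byte counts vary across Python versions and platforms, so none are hard-coded here. Note also that sys.getsizeof() reports only the container itself (the list's array of references), not the integer objects it points to.

```python
import sys

numbers = range(1_000_000)

# Eager: builds all 500,000 squares up front
squared_list = [n ** 2 for n in numbers if n % 2 == 0]

# Lazy: stores only the iteration state, not the values
squared_gen = (n ** 2 for n in numbers if n % 2 == 0)

list_size = sys.getsizeof(squared_list)
gen_size = sys.getsizeof(squared_gen)

print(f"List size: {list_size:,} bytes")
print(f"Generator size: {gen_size:,} bytes")
```

The generator's size stays roughly constant no matter how large the input range is, while the list grows with the number of elements.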

Here’s why this matters, beyond people telling you “that’s how it’s done in Python”: list comprehensions are typically faster than building a list with repeated .append() calls, largely because they avoid the attribute lookup and method call on every iteration. Generator expressions (written with parentheses) are “lazy”: they produce items one at a time, so you can process massive datasets without exhausting your system’s memory.
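As a small illustration of laziness (a sketch, not part of the original example), a generator expression can be passed straight to an aggregating function such as sum(), so no intermediate list is ever built. It also shows a consequence worth remembering: a generator can be consumed only once.

```python
numbers = range(10)

# No intermediate list: sum() pulls one square at a time
squared_gen = (n ** 2 for n in numbers if n % 2 == 0)
total = sum(squared_gen)
print(total)  # 0 + 4 + 16 + 36 + 64 = 120

# The generator is now exhausted; a second pass yields nothing
leftover = list(squared_gen)
print(leftover)  # []
```

If you need to iterate more than once, a list is the better fit; if you need a single pass over a large input, prefer the generator.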

Let’s see how to consume a generator expression one value at a time, using next():

    numbers = range(1000000)
    squared_gen = (n ** 2 for n in numbers if n % 2 == 0)

    # Values are computed only when requested, not all at once
    print(next(squared_gen))
    print(next(squared_gen))
    print(next(squared_gen))

Output:

    0
    4
    16

# 2. Decorators

Decorators are a way to modify the behavior of a function or class without permanently changing its source code. Think of them as wrappers around other functions.

## The Clunky Way

If you wanted to log how long several different functions took to run, you might manually add timing code to every single function.

    import time

    def process_data():
        start = time.time()
        # ... function logic ...
        end = time.time()
        print(f"process_data took {end - start:.4f}s")

    def train_model():
        start = time.time()
        # ... function logic ...
        end = time.time()
        print(f"train_model took {end - start:.4f}s")

    def generate_report():
        start = time.time()
        # ... function logic ...
        end = time.time()
        print(f"generate_report took {end - start:.4f}s")

The repetition makes the problem obvious: the same timing boilerplate is duplicated in every function. Let’s see how a decorator can fix this.

## The Pythonic Way

Here’s a more Pythonic approach to this task.

    import time
    from functools import wraps

    def timer_decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            start = time.time()
            result = func(*args, **kwargs)
            end = time.time()
            print(f"{func.__name__} took {end - start:.4f}s")
            return result
        return wrapper

    @timer_decorator
    def heavy_computation():
        return sum(range(10**7))

    heavy_computation()

Output:

    heavy_computation took 0.0941s

Notice how timer_decorator() “wraps” heavy_computation(): every call to heavy_computation() now goes through wrapper(), which records the start time, runs the original function, prints the elapsed time, and returns the original result.
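To make the wrapping mechanics concrete, here is a minimal sketch using a hypothetical shout() decorator (the names shout, greet, and greet_plain are invented for illustration). It shows that the @ syntax is shorthand for passing a function to the decorator and rebinding the result, and what @wraps contributes.

```python
from functools import wraps

def shout(func):
    """Hypothetical decorator: upper-cases the wrapped function's result."""
    @wraps(func)  # copies __name__, __doc__, etc. onto wrapper
    def wrapper(*args, **kwargs):
        return func(*args, **kwargs).upper()
    return wrapper

@shout
def greet(name):
    """Return a greeting."""
    return f"hello, {name}"

def greet_plain(name):
    return f"hello, {name}"

# Equivalent to decorating greet_plain with @shout
greet_manual = shout(greet_plain)

print(greet("ada"))         # HELLO, ADA
print(greet_manual("ada"))  # HELLO, ADA
print(greet.__name__)       # greet (would be "wrapper" without @wraps)
```

Without @wraps, the decorated function would report the wrapper's name and docstring, which makes logs and debugging output harder to read.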

Decorators promote the “don’t repeat yourself” (DRY) principle. They are essential for logging, authentication, and caching in production environments.
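Caching is a case where the standard library already ships a decorator. The sketch below applies functools.lru_cache to a deliberately naive recursive Fibonacci function (fib is an illustrative name, not from the original article) so that each distinct argument is computed only once.

```python
from functools import lru_cache

@lru_cache(maxsize=None)  # unbounded cache keyed on the call arguments
def fib(n):
    """Naive recursive Fibonacci, made fast by caching."""
    return n if n < 2 else fib(n - 1) + fib(n - 2)

result = fib(30)
info = fib.cache_info()

print(result)       # 832040
print(info.misses)  # 31: one real computation per distinct n in 0..30
print(info.hits > 0)  # True: repeated subproblems came from the cache
```

The function body is unchanged; the decorator alone turns an exponential-time recursion into a linear one.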

# 3. Context Managers (with Statements)

Managing resources like files, database connections, or network sockets is a common source of bugs. If you forget to close a file, you can leak file handles, lose buffered writes, or keep the file locked against other processes.

## The Clunky Way

Here we open a file, use it, and force a close when it’s no longer needed.

    f = open("data.txt", "w")

    try:
        f.write("Hello World")
    finally:
        # Easy to forget!
        f.close()

## The Pythonic Way

A with statement handles the cleanup for us.

    # The file is closed automatically when the block exits, even if an error occurs
    with open("data.txt", "w") as f:
        f.write("Hello World")

Not only is this more concise, the logic is also easier to follow. You get the easily forgotten close() for free, because “setup” and “teardown” happen reliably. In data work, this is especially useful when connecting to SQL databases or handling large input/output (I/O) tasks.
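The same setup and teardown guarantee can be given to your own resources. Below is a minimal sketch using contextlib.contextmanager from the standard library; managed_resource and the events list are hypothetical names standing in for a real connection and its log.

```python
from contextlib import contextmanager

events = []

@contextmanager
def managed_resource(name):
    events.append(f"open {name}")       # setup
    try:
        yield name                      # body of the with block runs here
    finally:
        events.append(f"close {name}")  # teardown runs even on error

try:
    with managed_resource("db") as resource:
        events.append(f"use {resource}")
        raise ValueError("something went wrong")
except ValueError:
    pass

print(events)  # ['open db', 'use db', 'close db']
```

Even though the block raised an exception, the teardown step still ran, which is exactly the behavior the try/finally version has to spell out by hand.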

# 4. Mastering *args and **kwargs

Sometimes you don’t know how many arguments will be passed to a function. Python handles this elegantly with the * and ** “packing” operators. Even if you have never used them yourself, you have almost certainly seen them in other people’s code.

## The Pythonic Example

Two operators do the work:

- *args (non-keyword arguments): collects extra positional arguments into a tuple. Use it when you don’t know how many items will be passed to a function.
- **kwargs (keyword arguments): collects extra named arguments into a dictionary. Use it for optional settings or named parameters.

Here is the Pythonic way to handle both in a single function:

    def make_profile(name, *tags, **metadata):
        # name is a regular (required) parameter
        print(f"User: {name}")

        # tags is a tuple of the extra positional arguments
        print(f"Tags: {tags}")

        # metadata is a dictionary of the extra keyword arguments
        print(f"Details: {metadata}")

    make_profile("Alice", "DataScientist", "Pythonist", location="NY", seniority="Senior")

Output:

    User: Alice
    Tags: ('DataScientist', 'Pythonist')
    Details: {'location': 'NY', 'seniority': 'Senior'}

This pattern underpins the flexible interfaces of libraries like Scikit-Learn and Matplotlib, where many functions accept arbitrary keyword arguments and forward them to lower-level code. It lets you pass any number of configuration settings into a function, making your code adaptable to changing requirements.
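The same two operators also work in the other direction, "unpacking" a sequence or dictionary at the call site. The sketch below uses hypothetical functions (connect and connect_with_logging are invented for illustration) to show a wrapper that forwards whatever it receives without needing to know the wrapped function's signature.

```python
def connect(host, port=5432, **options):
    """Hypothetical function that accepts arbitrary keyword settings."""
    return {"host": host, "port": port, **options}

def connect_with_logging(*args, **kwargs):
    """Wrapper that forwards whatever it receives, unchanged."""
    print(f"connect called with args={args} kwargs={kwargs}")
    return connect(*args, **kwargs)

# Unpacking at the call site: * spreads a sequence, ** spreads a dict
positional = ("db.example.com",)
settings = {"port": 6543, "timeout": 10}

config = connect_with_logging(*positional, **settings)
print(config)  # {'host': 'db.example.com', 'port': 6543, 'timeout': 10}
```

This forwarding idiom is the same one the decorator examples rely on in their wrapper(*args, **kwargs) functions.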

# 5. Dunder Methods (Magic Methods)

“Dunder” is short for “double underscore” (e.g. __init__). Officially called special methods, though more often referred to as magic methods, they allow your custom objects to emulate built-in Python behavior.

## The Pythonic Way

Let’s see how to use magic methods to get automatic behavior added to our classes.

    class Dataset:
        def __init__(self, data):
            self.data = data

        def __len__(self):
            return len(self.data)

        def __str__(self):
            return f"Dataset with {len(self.data)} items"

    # Create a dataset instance
    my_data = Dataset([1, 2, 3])

    # Calls __len__
    print(len(my_data))

    # Calls __str__
    print(my_data)

Output:

    3
    Dataset with 3 items

By implementing the __len__ and __str__ dunders, our custom class works with the built-in len() and print() functions, just like a built-in type.
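One less obvious benefit, sketched below with a trimmed-down copy of the Dataset class: when a class defines __len__ but not __bool__, Python uses the length to decide truthiness, so an empty dataset behaves like an empty list in an if statement.

```python
class Dataset:
    def __init__(self, data):
        self.data = data

    def __len__(self):
        return len(self.data)

empty = Dataset([])
full = Dataset([1, 2, 3])

# With __len__ defined and no __bool__, truthiness falls back to length
print(bool(empty))  # False
print(bool(full))   # True
```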

Dunder methods are the backbone of the Python object protocol. By implementing methods like __getitem__ or __call__, you can make your classes behave like lists, dictionaries, or even functions, leading to much more intuitive APIs.
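As a rough sketch of that idea, the example below adds __getitem__ to a Dataset class and defines a separate, hypothetical Scaler class with __call__ (Scaler and its factor parameter are invented for illustration).

```python
class Dataset:
    def __init__(self, data):
        self.data = list(data)

    def __len__(self):
        return len(self.data)

    def __getitem__(self, index):
        # Delegating to the list gives indexing, slicing, and iteration
        return self.data[index]

class Scaler:
    """Hypothetical callable object that multiplies by a fixed factor."""
    def __init__(self, factor):
        self.factor = factor

    def __call__(self, value):
        return value * self.factor

ds = Dataset([10, 20, 30])
double = Scaler(2)

print(ds[0])                    # 10
print(ds[1:])                   # [20, 30]
print([double(x) for x in ds])  # [20, 40, 60]
```

Here ds can be indexed and looped over like a list, and double can be called like a function, even though both are ordinary class instances.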

# Wrapping Up

Mastering these five concepts marks the transition from writing scripts to building software. By utilizing list comprehensions for speed, decorators for clean logic, context managers for safety, *args/**kwargs for flexibility, and dunder methods for object power, you are setting the foundation upon which you can build further Python expertise.

Matthew Mayo (@mattmayo13) holds a master’s degree in computer science and a graduate diploma in data mining. As managing editor of KDnuggets & Statology, and contributing editor at Machine Learning Mastery, Matthew aims to make complex data science concepts accessible. His professional interests include natural language processing, language models, machine learning algorithms, and exploring emerging AI. He is driven by a mission to democratize knowledge in the data science community. Matthew has been coding since he was 6 years old.