# Introduction

Anyone who has spent a fair amount of time doing data science may sooner or later learn something: the golden rule of downstream machine learning modeling, known as garbage in, garbage out (GIGO).

For example, feeding a linear regression model with highly collinear data, or running ANOVA tests on heteroscedastic variances, is the perfect recipe… for ineffective models that won’t learn properly.

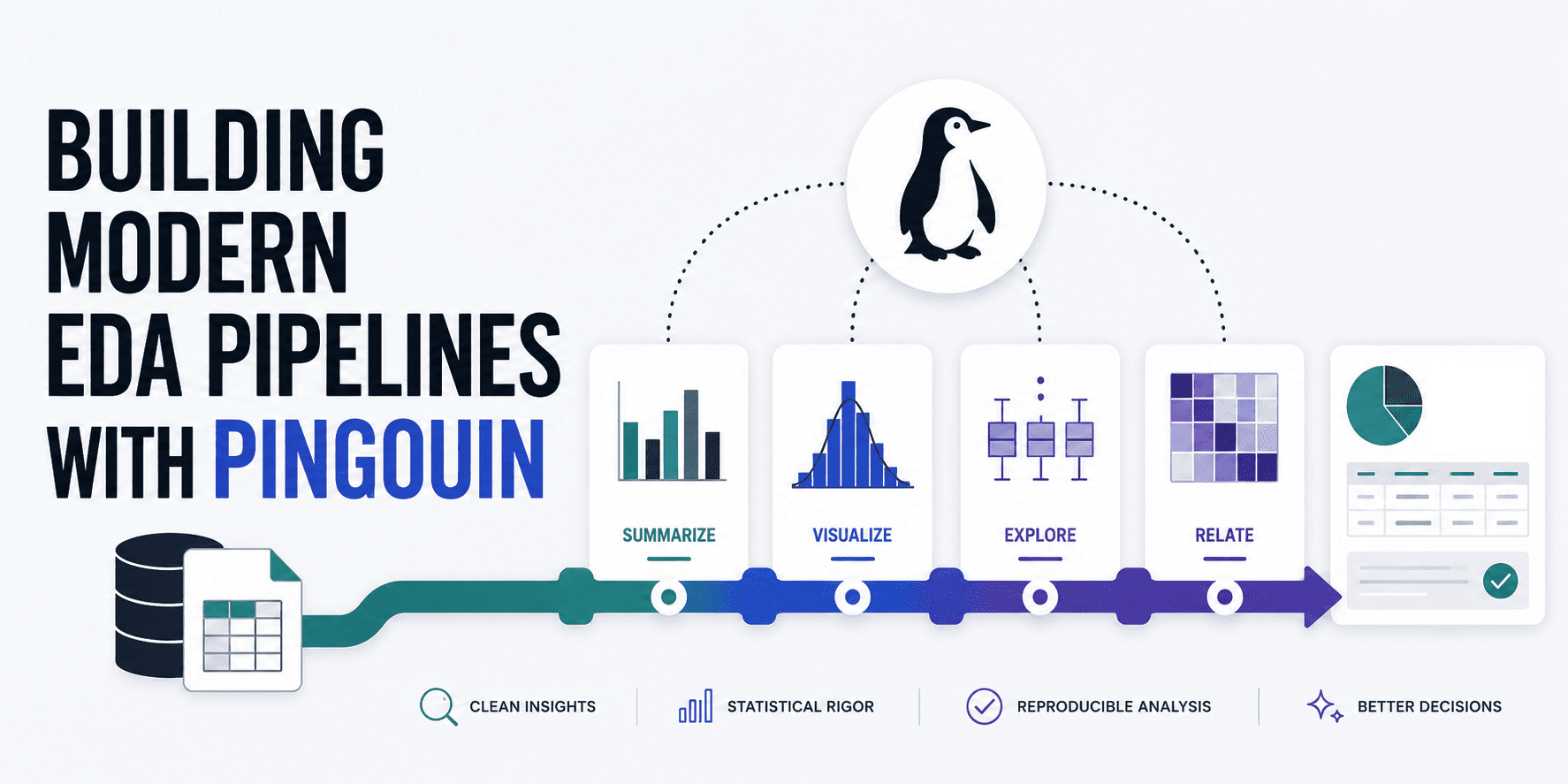

Exploratory data analysis (EDA) has a lot to say in terms of visualizations like scatter plots and histograms, yet they aren’t sufficient when we need rigorous validation of data against the mathematical assumptions needed in downstream analyses or models. Pingouin helps do this by bridging the gap between two well-known libraries in data science and statistics: SciPy and pandas. Further, it can be a great ally to build solid, automated EDA pipelines. This article teaches you how to build a holistic pipeline for rigorous, statistical EDA, validating several important data properties.

# Initial Setup

Let’s start by making sure we install Pingouin in our Python environment (and pandas, in case you don’t have it yet):

!pip install pingouin pandas

After that, it’s time to import these key libraries and load our data. As an example open dataset, we will use one containing samples of wine properties and their quality.

import pandas as pd

import pingouin as pg

# Loading the wine dataset from an open dataset GitHub repository

url = "https://raw.githubusercontent.com/gakudo-ai/open-datasets/refs/heads/main/wine-quality-white-and-red.csv"

df = pd.read_csv(url)

# Displaying the first few rows to understand our features

df.head()

# Checking Univariate Normality

The first of the specific exploratory analyses we will conduct pertains to a check on univariate normality. Many traditional algorithms for training machine learning models — and statistical tests like ANOVAs and t-tests, for that matter — need the assumption that continuous variables follow a normal, a.k.a. Gaussian distribution. Pingouin’s pg.normality() function helps do this check through a Shapiro-Wilk test across the entire dataframe:

# Selecting a subset of continuous features for normality checks

features = ['fixed acidity', 'volatile acidity', 'citric acid', 'pH', 'alcohol']

# Running the normality test

normality_results = pg.normality(df[features])

print(normality_results)

Output:

W pval normal

fixed acidity 0.879789 2.437973e-57 False

volatile acidity 0.875867 6.255995e-58 False

citric acid 0.964977 5.262332e-37 False

pH 0.991448 2.204049e-19 False

alcohol 0.953532 2.918847e-41 False

It seems like none of the numeric features at hand satisfy normality. This is by no means something wrong with the data; it’s simply part of its traits. We are just getting the message that, in later data preprocessing steps beyond our EDA, we might want to consider applying data transformations like log-transform or Box-Cox that make the raw data look “more normal-like” and thus more suitable for models that assume normality.

# Checking Multivariate Normality

Similarly, evaluating normality not feature by feature, but accounting for the interaction between features, is another interesting aspect to inspect. Let’s see how to check for multivariate normality: a key requirement in techniques like multivariate ANOVA (MANOVA), for instance.

# Henze-Zirkler multivariate normality test

multivariate_normality_results = pg.multivariate_normality(df[features])

print(multivariate_normality_results)

Output:

HZResults(hz=np.float64(23.72107048442373), pval=np.float64(0.0), normal=False)

And guess what: you may get something like HZResults(hz=np.float64(23.72107048442373), pval=np.float64(0.0), normal=False), which means multivariate normality doesn’t hold either. If you are going to train a machine learning model on this dataset, this means non-parametric, tree-based models like gradient boosting and random forests might be a more robust alternative than parametric, weight-based models like SVM, linear regression, and so on.

# Checking Homoscedasticity

Next comes a tricky word for a rather simple concept: homoscedasticity. This refers to equal or constant variance across errors in predictions, and it is interpreted as a measure of reliability. We will test this property (sorry, too hard to write its name again!) with Pingouin’s implementation of Levene’s test, as follows:

# Levene's test for equal variances across groups

# 'dv' is the target, dependent variable, 'group' is the categorical variable

homoscedasticity_results = pg.homoscedasticity(data=df, dv='alcohol', group='quality')

print(homoscedasticity_results)

Result:

W pval equal_var

levene 66.338684 2.317649e-80 False

Since we got False once again, we have a so-called heteroscedasticity problem, which should be accounted for in downstream analyses. One possible way could be by employing robust standard errors when training regression models.

# Checking Sphericity

Another statistical property to analyze is sphericity, which identifies whether the variances of differences between possible pairwise combinations of conditions are equal. Testing this property is usually desirable before running principal component analysis (PCA) for dimensionality reduction, as it helps us understand whether there are correlations between variables. PCA will be rendered rather useless in case there are not any:

# Mauchly's sphericity test

sphericity_results = pg.sphericity(df[features])

print(sphericity_results)

Result:

SpherResults(spher=False, W=np.float64(0.004437706589942777), chi2=np.float64(35184.26583883276), dof=9, pval=np.float64(0.0))

Looks like we have chosen a pretty indomitable, arid dataset! But fear not — this article is intentionally designed to focus on the EDA process and help you identify plenty of data issues like these. At the end of the day, detecting them and knowing what to do about them before downstream, machine learning analysis is far better than building a potentially flawed model. In this case, there is a catch: we have a p-value of 0.0, which means the null hypothesis of an identity correlation matrix is rejected, i.e. meaningful correlations exist between the variables. So if we had plenty of features and wanted to reduce dimensionality, applying PCA might be a good idea.

# Checking Multicollinearity

Last, we will check multicollinearity: a property that indicates whether there are highly correlated predictors. This might become, at some point, an undesirable property in interpretable models like linear regressors. Let’s check it:

# Calculating a robust correlation matrix with p-values

correlation_matrix = pg.rcorr(df[features], method='pearson')

print(correlation_matrix)

Output matrix:

fixed acidity volatile acidity citric acid pH alcohol

fixed acidity - *** *** *** ***

volatile acidity 0.219 - *** *** **

citric acid 0.324 -0.378 - ***

pH -0.253 0.261 -0.33 - ***

alcohol -0.095 -0.038 -0.01 0.121 -

While pandas’ corr() can also be used, Pingouin’s counterpart uses asterisks to indicate the statistical significance level of each correlation (* for p < 0.05, ** for p < 0.01, and *** for p < 0.001). A correlation can be statistically significant yet still small in magnitude — multicollinearity becomes a concern when the absolute value of the correlation is high (typically above 0.8). In our case, none of the pairwise correlations are dangerously large, with all five evaluated features providing largely non-overlapping, unique information of their own for further analyses.

# Wrapping Up

Through a series of examples applied and explained one by one, we have seen how to unleash the potential of Pingouin, an open-source Python library, to perform robust, modern EDA pipelines that help you make better decisions in data preprocessing and downstream analyses based on advanced statistical tests or machine learning models, helping you choose the right actions to perform and the right models to use.

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.